Generative AI in numbers

Why do most Gen AI projects and agents deliver no ROI?

Generative AI needs to justify the investment, whether you’re a startup with an innovative idea, a product company seeking differentiation, or a traditional business attempting an AI transformation. Yet only 5% of AI programs yield great results at scale, according to MIT research.

No coherent strategy behind AI

Many teams struggle to understand the real capabilities of LLMs. Some leaders get sucked into hype cycles, while others systematically overlook real possibilities. As a result, engineers launch scattershot pilots instead of targeting the acute, recurring pains that cost the business money.

Data foundations being weak or absent

Proprietary data needed for results beyond a simple pilot is often scattered across systems and has no real owner. Critical context about workflows gets lost in email, Slack, WhatsApp, and calls that nobody bothered to record. That's why MindK first gets data and processes in order, so that you don't scale chaos with AI.

Hallucinations that undermine trust

When faulty output creeps into production, employees lose trust in AI systems. The risk is even greater for autonomous agents. To generate reliable output, production systems need grounded retrieval and clear validation. In higher-risk workflows, they also need fallback logic and human review.
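The validation, fallback, and human-review steps described above can be sketched as a small routing function. This is a minimal illustration, not a description of any particular production system: the `Draft` type, the `route_answer` function, and the three outcome labels are hypothetical names introduced here, and the grounding check (every citation must point at a retrieved passage) is just one simple validation rule among many possible ones.

```python
from dataclasses import dataclass


@dataclass
class Draft:
    """A model answer plus the IDs of the passages it claims to rely on (hypothetical type)."""
    text: str
    cited_ids: list[str]


def route_answer(draft: Draft, retrieved_ids: set[str], high_risk: bool) -> str:
    """Return 'send', 'human_review', or 'fallback' for a drafted answer."""
    # Validation: the answer must be non-empty, cite at least one source,
    # and every citation must point at a passage that was actually retrieved.
    grounded = (
        bool(draft.text.strip())
        and bool(draft.cited_ids)
        and set(draft.cited_ids) <= retrieved_ids
    )
    if not grounded:
        return "fallback"      # assumption: a safe canned reply or an escalation
    if high_risk:
        return "human_review"  # a person approves before anything is sent
    return "send"
```

For example, `route_answer(Draft("Refunds are accepted within 30 days.", ["doc-1"]), {"doc-1", "doc-2"}, high_risk=False)` returns `"send"`, while the same draft citing an ID that was never retrieved returns `"fallback"`.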

Outdated systems AI cannot plug into

Internal, business-critical systems often have no APIs that agents can easily use. In many environments, people still re-enter data by hand. AI only becomes useful when it can retrieve the right data, trigger the right action, and fail safely when conditions are messy.

Security & compliance risks discovered after the fact

AI expands the attack surface, so questions about permissions, retention, auditability, and approval logic must shape the system architecture early. When those questions are deferred, teams end up rebuilding core flows under pressure.

No clear ROI framework for continued investment

Many companies see few gains from pilots because they simply have no mechanism to measure and improve AI performance in the long term. There is no shared definition of success, no baseline for cost or throughput, and no mechanism to connect model behavior to business impact.

Creating AI from scratch

Building AI from the ground up can get expensive fast. Beyond the model itself, teams need data pipelines, retrieval design, evaluation sets, security controls, observability, fallback logic, and integrations with existing systems. MindK helps startups quickly validate their AI ideas with a library of ready-made agents and AI building blocks that reduce initial investments.

Secure architecture

AI governance and control points

Reliability and evaluation

LLM monitoring and cost visibility

Stage 1: Exploration

Despite promising ideas, there is no hard view of where AI can create value. A business at this stage doesn't know what data is usable or who owns each workflow, and isn't aware of the risks that make a use case harder than it first appears.

What we offer: a 2-day AI case discovery workshop. MindK assesses the readiness of your data, infrastructure, team, and workflows. You get an ROI forecast for the top 3 use cases.

Stage 2: Prototyping

Teams need evidence before committing to a larger build. The main questions are technical and operational. Will the system perform on real data? Where does it break? Is the use case strong enough to justify investment?

What we offer: rapid validation with a proof of concept in 1 to 4 weeks. We test the core workflows to make the next decision clearer.

Stage 3: Production

Prototypes stop being impressive. The real work shifts to implementation, integration, testing, security, observability, and rollout planning. Edge cases start to surface when the system has to operate reliably.

What we offer: production-ready AI with SLA and monitoring. We go all the way from architecture to MLOps setup (CI/CD, monitoring, drift detection, governance).

Stage 4: Scale and optimize

Once the first use case is live, questions appear fast. Teams have to manage quality drift, cost, adoption, governance, model changes, and pressure to expand into adjacent workflows without losing what's already working.

What we offer: monitoring, performance optimization (latency, cost-per-inference, throughput), MLOps maturity (automated retraining, A/B testing, shadow deployment), team enablement.

AI readiness assessment

Duration: 1–2 weeks

We start by assessing workflow fit, data quality, system constraints, security requirements, and internal ownership. The goal is to separate feasible use cases from ideas that still depend on missing data, undefined rules, or unstable integrations.

What you get: risks and blockers, workflow + data dependency maps, security considerations, build-vs-buy guide.

Use case prioritization

Duration: 1 week

Ranking is based on business value, delivery effort, risk, data readiness, and integration complexity. Our consultants explain whether AI is actually the right tool, or whether a conventional workflow, rules engine, or search layer would solve the problem more cleanly.

What you get: use-case shortlist with a value-versus-effort view, initial roadmap.
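A ranking across the five criteria named above can be sketched as a weighted score. This is an illustrative sketch only: the weights, the 1 to 5 rating scale, and the function names `score` and `rank` are assumptions made for the example, not a description of the scoring model used in an actual engagement.

```python
# Hypothetical weights: criteria that favor a use case add to the score,
# criteria that count against it subtract from it.
WEIGHTS = {
    "business_value": 0.35,
    "data_readiness": 0.20,
    "delivery_effort": -0.20,
    "risk": -0.15,
    "integration_complexity": -0.10,
}


def score(ratings: dict[str, int]) -> float:
    """Weighted sum of ratings, each assumed to be on a 1 (low) to 5 (high) scale."""
    return sum(weight * ratings[name] for name, weight in WEIGHTS.items())


def rank(use_cases: dict[str, dict[str, int]]) -> list[str]:
    """Return use-case names ordered from highest to lowest score."""
    return sorted(use_cases, key=lambda name: score(use_cases[name]), reverse=True)
```

With these weights, a use case rated high on value and data readiness and low on effort, risk, and integration complexity rises to the top of the shortlist. The weights themselves are the part worth debating with stakeholders.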

Architecture design

Duration: 1–2 weeks

We design the model and provider strategy, retrieval, orchestration logic, integration points, access controls, and trace instrumentation. You also get clear evaluation criteria your system has to meet before rollout.

What you get: model, retrieval, and orchestration approach, acceptance criteria, validation build scope.

Validation build

Duration: 1–4 weeks

MindK validates the idea using a focused version built on real or representative data. The point is to test failure modes early, measure performance against the evaluation set, and decide whether the use case is ready for production investment.

What you get: evaluation against agreed criteria, findings on failure modes and design gaps.
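Measuring a validation build against an evaluation set can be as simple as a pass rate compared with an agreed threshold. The sketch below assumes a deliberately crude check (the expected phrase must appear in the output) and a hypothetical threshold of 0.9. Real acceptance criteria are usually richer, and `pass_rate` and `ready_for_production` are names introduced here for illustration.

```python
from typing import Callable


def pass_rate(system: Callable[[str], str], eval_set: list[tuple[str, str]]) -> float:
    """Fraction of (prompt, expected phrase) cases where the output contains the phrase."""
    if not eval_set:
        raise ValueError("evaluation set is empty")
    passed = sum(
        expected.lower() in system(prompt).lower() for prompt, expected in eval_set
    )
    return passed / len(eval_set)


def ready_for_production(rate: float, threshold: float = 0.9) -> bool:
    """Compare the measured pass rate with the agreed acceptance threshold (assumed 0.9)."""
    return rate >= threshold
```

Here `system` stands for whatever callable wraps the prototype. Keeping the evaluation set and the threshold fixed between runs is what makes results comparable as prompts, models, or retrieval logic change.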

Production build, integration, hardening

Duration: 4–10 weeks

We turn the validated design into a production system. You get observability, fallback logic, security controls, deployment pipelines, and version control for prompts, models, and retrieval logic. We also cover rollout planning, rollback paths, quotas, and the operational constraints that prototypes usually ignore.

What you get: production-ready integrations, security, observability, rollout and rollback plan, handoff materials.

Launch, monitoring, continuous improvement

Duration: ongoing

Launch in controlled conditions, monitor quality, latency, failure patterns, and cost, then improve the system based on production traces and human feedback. As usage grows, we extend the operating model to cover model updates, evaluation drift, new workflows, and tighter governance.

What you get: monitoring, production findings, performance & cost baselines, ongoing optimization.
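Performance and cost baselines of the kind listed above can be derived from production traces. The following sketch assumes a hypothetical `Trace` record with three fields and uses the nearest-rank method for the 95th percentile. A real deployment would more likely pull these figures from its observability stack.

```python
import math
from dataclasses import dataclass


@dataclass
class Trace:
    """One logged request (hypothetical schema)."""
    latency_ms: float
    cost_usd: float
    ok: bool


def summarize(traces: list[Trace]) -> dict[str, float]:
    """Compute p95 latency, average cost per request, and failure rate."""
    if not traces:
        raise ValueError("no traces to summarize")
    latencies = sorted(t.latency_ms for t in traces)
    # Nearest-rank percentile: the smallest value with at least 95% of samples at or below it.
    p95_index = math.ceil(0.95 * len(latencies)) - 1
    return {
        "p95_latency_ms": latencies[p95_index],
        "avg_cost_usd": sum(t.cost_usd for t in traces) / len(traces),
        "failure_rate": sum(not t.ok for t in traces) / len(traces),
    }
```

Recording the same summary before and after each model or prompt change gives a baseline for spotting quality drift and cost regressions.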

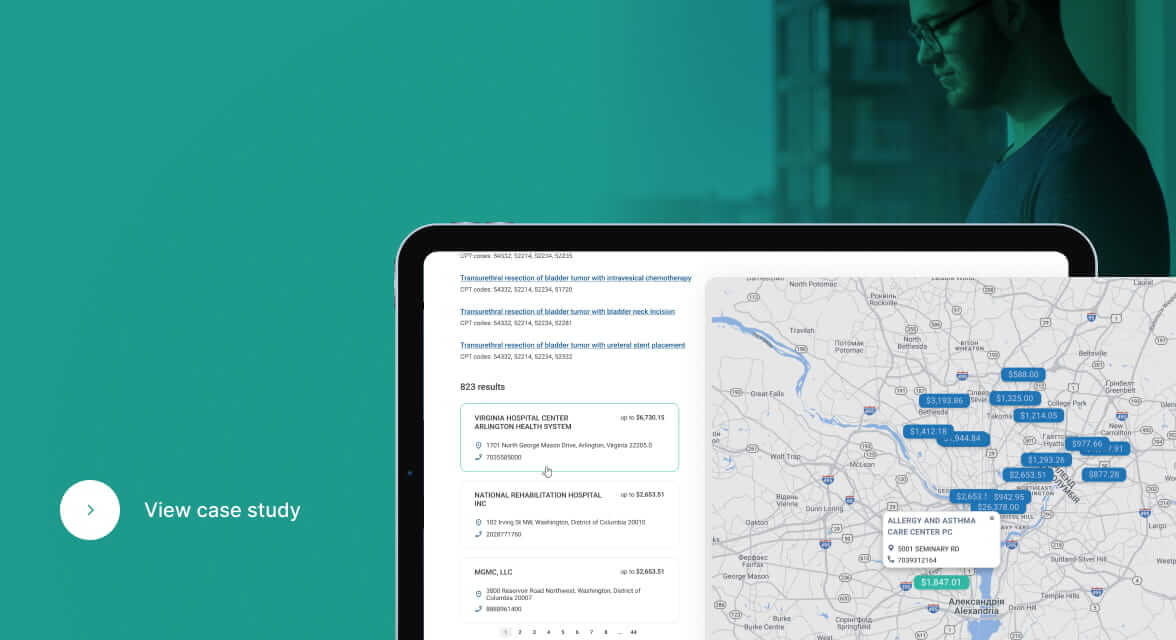

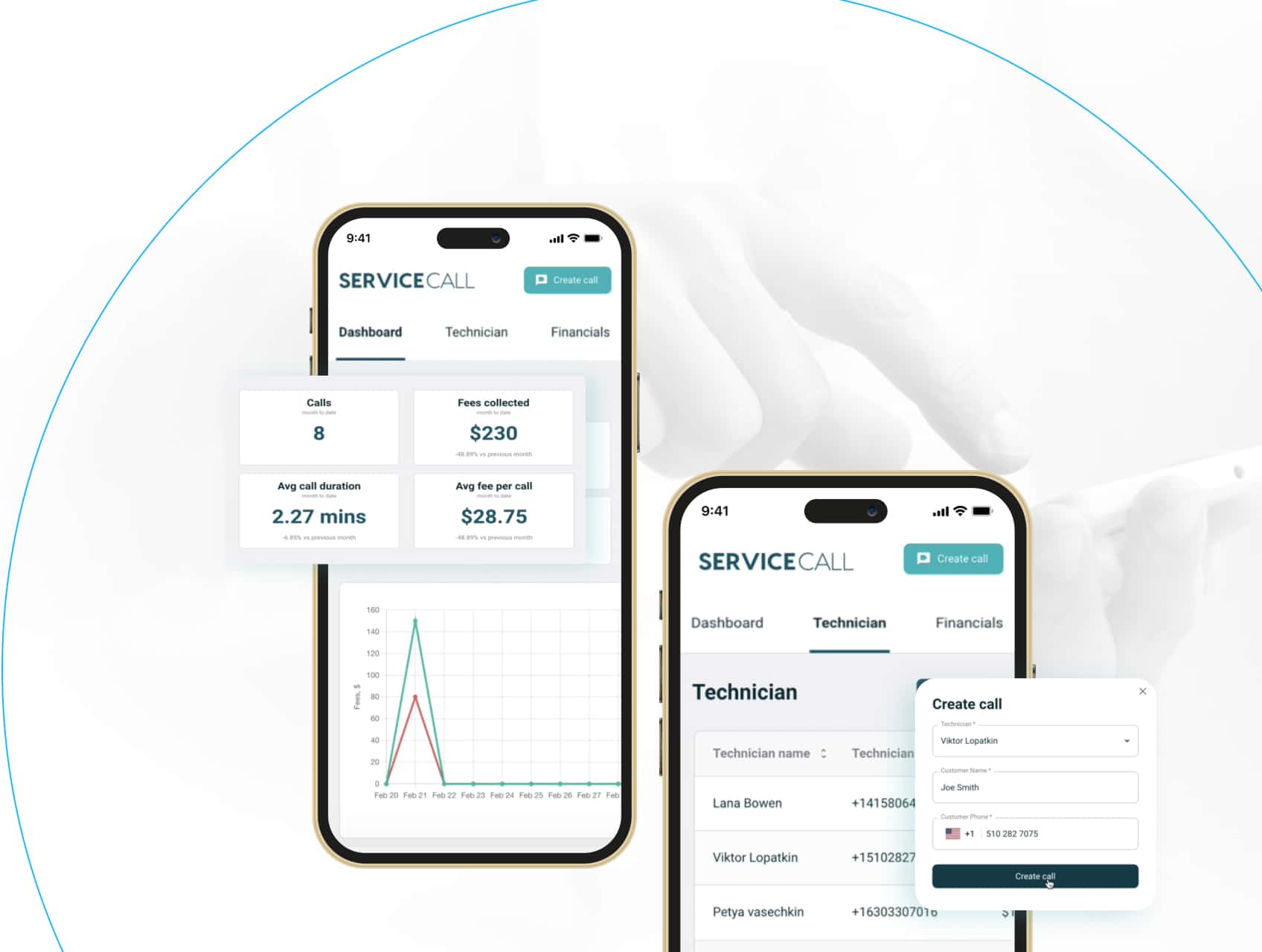

Leverage our library of ready-made AI agents

Prepare your business for AI transformation

Legacy system modernization

Post-vibecode cleanup and hardening

Data preparation and pipeline design

Integration and interoperability

What our clients say

Outcome-first mindset

Every AI system we build is designed to solve recurring operational problems and relieve business pains in production.

Full-stack AI expertise

The work spans strategy, data engineering, workflow automation, cloud-native delivery, APIs, MLOps, and compliance.

Speed without recklessness

Delivery discipline comes first: carefully designed architecture, human review, deployment, and support, all tied to clear success metrics.

Responsible AI by design

Security, access control, auditability, evaluation discipline, and human oversight are baked into every model and LLM implementation.

Our approach

Request a Generative AI Readiness Assessment

Let us know about your technology challenges and we'll help you resolve them.

FAQ

- How do we know if we are ready for generative AI?

Start with the workflow, the business pain, the data reality, the integration surface, the owner, and the success metric. If even two of those are vague, the team is usually not ready to build yet, and the right first step is assessment and prioritization, not implementation.

- How long does it take to go from an idea to a production-ready system?

That depends on the scope and operating conditions. A narrow implementation can move quickly. A regulated, integration-heavy system takes longer because the hard work sits in workflow design, data quality, testing, and controls.

- Do we need proprietary data to make generative AI useful?

Public models can provide language capability. The business value usually comes from internal data and workflow context. In many cases, the difference comes down to retrieval quality, workflow design, and how well the system fits into the rest of the stack.

- Can AI be integrated into existing software, or do we need to rebuild?

In many cases, AI can be integrated through APIs, middleware, and event-driven connections rather than a full rebuild. The right answer depends on how brittle the current systems are and where the real bottlenecks sit.

- How do you reduce hallucinations in production systems?

Our generative AI consultancy makes the output trustworthy through grounded retrieval, better context assembly, structured outputs, evaluation, fallback paths, permission-aware tool use, and human review wherever mistakes carry real cost.

- How do you keep proprietary data secure when using LLMs?

At MindK, security starts at the architecture level. We design permissions, encryption, redaction, logging, and access boundaries around the actual exposure risks of the workflow. Auditability is part of that design itself.
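As one small illustration of redaction, text can be scrubbed of obvious identifiers before it leaves the trusted boundary. This sketch handles email addresses only, with a simplified pattern that is an assumption of the example. It is not a complete PII filter, and `redact_emails` is a hypothetical helper name.

```python
import re

# Simplified email pattern (assumption): good enough for a sketch, not RFC-complete.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)
```

For example, `redact_emails("Contact jane.doe@example.com today")` returns `"Contact [EMAIL] today"`. Production redaction usually covers more identifier types and is paired with logging of what was removed.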

- How much does generative AI consulting cost?

Cost depends on scope. A focused readiness engagement is very different from a production build with multiple integrations, governance requirements, and ongoing optimization. The practical way to estimate cost is to scope the workflow first, then map the dependencies, the integration surface, and the quality bar.

- How does your Gen AI consulting company measure ROI?

Start with a baseline. Then measure the operational shift the system is supposed to create. That could mean shorter cycle times, fewer manual touches, faster resolution, lower error rates, better conversion, or higher throughput. Tie the technical evaluation to a business KPI early.
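The baseline-first approach can be made concrete with simple arithmetic. The sketch below assumes the operational shift is a shorter handling time per task and expresses ROI as net benefit divided by cost. The function name and every input are hypothetical, and real engagements would track whichever KPI the workflow actually targets.

```python
def monthly_roi(
    baseline_minutes: float,
    new_minutes: float,
    tasks_per_month: int,
    hourly_rate: float,
    monthly_cost: float,
) -> float:
    """ROI for one month: (value of time saved - running cost) / running cost."""
    if monthly_cost <= 0:
        raise ValueError("monthly_cost must be positive")
    saved_hours = (baseline_minutes - new_minutes) * tasks_per_month / 60
    benefit = saved_hours * hourly_rate
    return (benefit - monthly_cost) / monthly_cost
```

With illustrative numbers, cutting handling time from 12 to 4 minutes across 3,000 tasks a month saves 400 hours. At an assumed rate of 30 per hour and a running cost of 6,000 per month, the benefit is 12,000 and the ROI is 1.0, meaning the system returns twice what it costs.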

- Can you work with our current stack and cloud environment?

Usually yes. In most cases, the goal of our LLM consulting services is to work with current systems where possible and modernize where the blockers are real.