Five years ago, we started working with a leading US company that helps businesses improve their online reputation. Our DevOps team was initially brought in to maintain the infrastructure, but it soon became apparent that the project needed major rework, starting with a move to a microservice architecture.

Company

Our client is one of America’s leading reputation management companies with over $100 million in funding.

The challenge

The company had a decade-old legacy system for tracking online reputation. The solution had what we call “spaghetti code”, making it very difficult to maintain. It used Ansible for provisioning and configuration management, which didn’t cover the whole infrastructure.

Our DevOps engineers had been supporting the project infrastructure for a long time. Most tasks were done manually, so solving even trivial problems took a lot of time. Releases were unstable and unpredictable. New versions were delivered as builds of Debian packages, which slowed down the build and delivery process.

It was impossible to update the operating system or libraries, as some of them had reached the end of support. Some system components were configured without regard for fault tolerance and scalability. There was no CI/CD pipeline in place; all processes were launched from a local machine. As a result, onboarding a new DevOps engineer to the project took a lot of time.

The client was overpaying for infrastructure. Their services were scattered over a large number of on-demand instances that didn’t use Amazon’s cost-saving features. What’s more, testing environments were operating 24/7, forcing the client to pay for computation power even during downtime.

The solution

Faced with many challenges, our team decided to migrate the project to a microservice architecture. We started by analyzing the entire process of building Debian packages and third-party services.

Our engineers implemented an automated CI/CD pipeline in GitHub Actions. The pipeline builds a separate container for every product component and pushes it to a Docker registry. ArgoCD streamlined the continuous delivery process, making it much more predictable for developers.

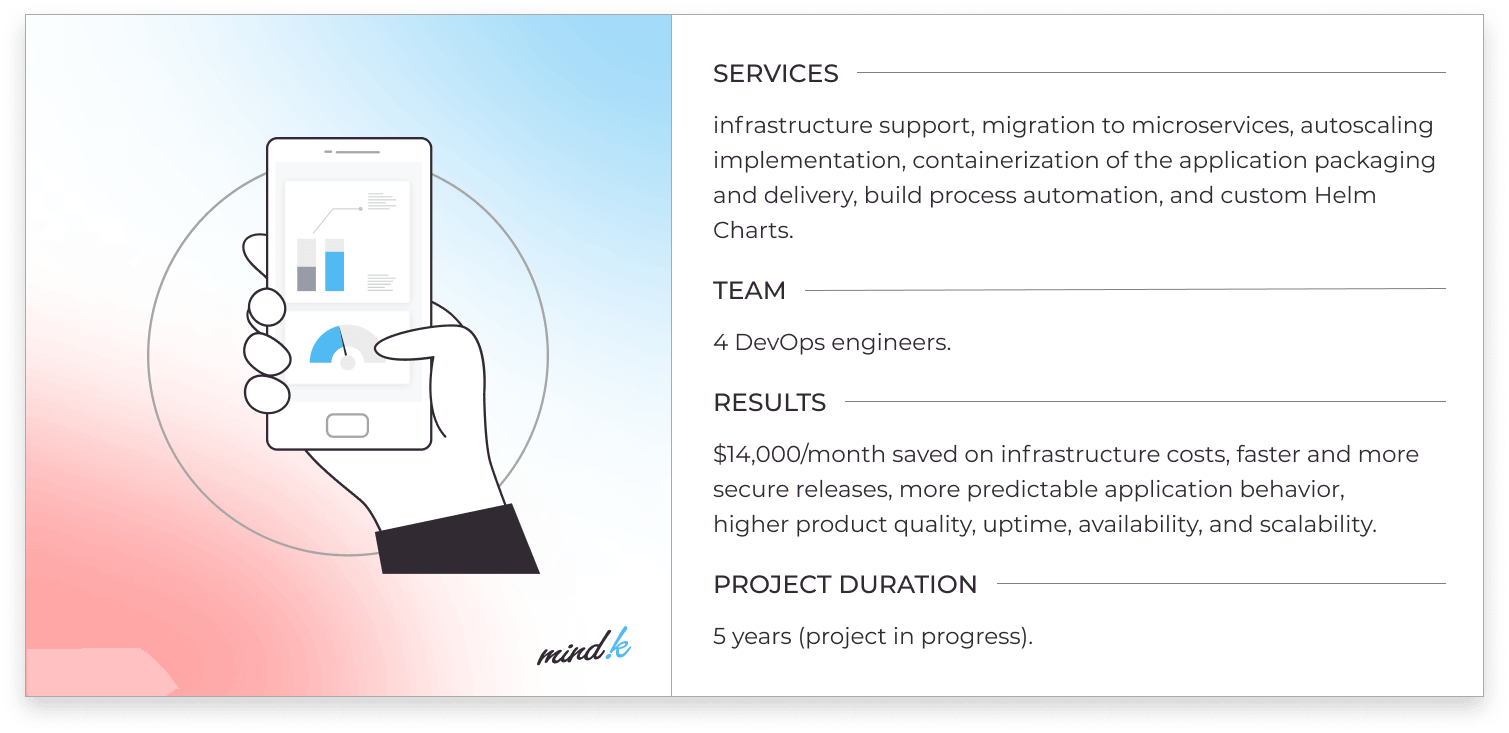

Over 5 years, our DevOps engineers introduced the following improvements:

- Optimization of infrastructure costs. We implemented autoscaling and an AWS working-hours scheduler that switches off testing environments during downtime. We also consolidated some of the services that didn’t need constant computing power onto a single instance to save on infrastructure costs.

- Migration to microservices (Docker containers, Kubernetes cluster, and Helm Charts for some of the product components).

- Improving the build process (migration from Jenkins builds to GitHub Actions).

- Kubernetes HPA (horizontal pod autoscaling) and AWS autoscaling groups.

- Improving configuration and provisioning. Migration from Ansible (launched locally and manually) to Docker containers and Kubernetes cluster, hosted on AWS Elastic Kubernetes Service (EKS).

- Custom Helm Charts for microservices and Bitbucket pipelines, which allow the deployment of database services and test environment queues to save costs.
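
To illustrate the two cost-related mechanisms in the list above, here is a minimal Python sketch. It is not the client's actual configuration. The working window (`WORK_START`, `WORK_END`, `WORK_DAYS`) and the function names `should_run` and `desired_replicas` are hypothetical. The replica calculation follows the scaling rule described in the Kubernetes documentation for the Horizontal Pod Autoscaler, and leaves out the tolerance and stabilization behaviour of the real controller.

```python
from datetime import datetime, time
from math import ceil

# Hypothetical working window; the case study does not state the real schedule.
WORK_START = time(8, 0)
WORK_END = time(20, 0)
WORK_DAYS = {0, 1, 2, 3, 4}  # Monday=0 ... Friday=4


def should_run(now: datetime) -> bool:
    """Return True if a test environment should be up at `now`."""
    in_work_day = now.weekday() in WORK_DAYS
    in_work_hours = WORK_START <= now.time() < WORK_END
    return in_work_day and in_work_hours


def desired_replicas(current_replicas: int, current_metric: float,
                     target_metric: float,
                     min_replicas: int, max_replicas: int) -> int:
    """Simplified HPA rule: ceil(current * currentMetric / targetMetric),
    clamped to the configured minimum and maximum replica counts."""
    raw = ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # Monday morning: the environment should be running.
    print(should_run(datetime(2024, 1, 8, 10, 0)))
    # Four pods at 90% average CPU against a 60% target.
    print(desired_replicas(4, 90.0, 60.0, min_replicas=2, max_replicas=10))
```

With this assumed window, a test environment would be up for 60 of the 168 hours in a week. The real schedule and autoscaling thresholds depend on the team's working pattern and load profile.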

Business value

We successfully migrated the project to a microservice architecture. Our client now saves around 14,000 USD per month as a result of infrastructure optimization. The improvements made by the DevOps team made our releases faster, more predictable, and more secure. The testing process became more predictable and accessible to the development team, which makes it possible to catch more bugs before a release. The microservice architecture allows us to roll out updates to individual components while maintaining high system uptime. This has improved the app’s availability and scalability.

These improvements can be easily replicated by using our proven DevOps tools and best practices. So if you want faster time to market, better product quality, and lower operational costs – check out how MindK can help you with our DevOps services.